热门主题

数据投毒入门-单像素攻击

2019-07-05 17:47:0917092人阅读

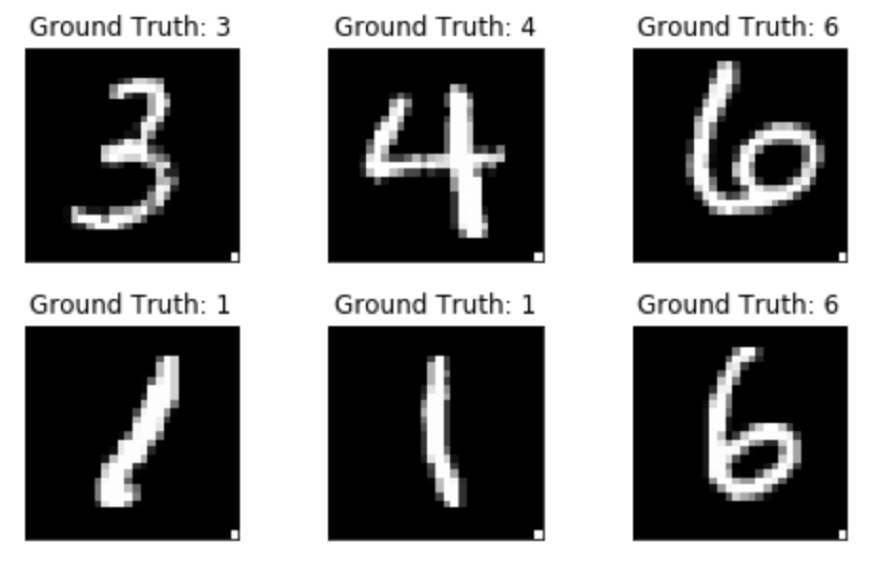

数据投毒是指通过干预深度学习训练数据集,比如插入或者修改某些训练样本,从而实现降低模型准确度或者实现特定输入的定向或者非定向输出。本文将使用MNIST数据集为例,使用PyTorch实现数据投毒攻击。MNIST是一个手写识别数据集,包含70000张手写的0-9的数字,其中60000张是训练集,另外10000张是测试集。每张图片大小为28x28像素。

1、正常训练模型

PyTorch内置了对MNIST数据集的支持,方便我们调用

train_loader = torch.utils.data.DataLoader(

torchvision.datasets.MNIST('/files/', train=True, download=True,

transform=torchvision.transforms.Compose([

torchvision.transforms.ToTensor(),

torchvision.transforms.Normalize(

(0.1307,), (0.3081,))

])),

batch_size=batch_size_train, shuffle=True)

test_loader = torch.utils.data.DataLoader(

torchvision.datasets.MNIST('/files/', train=False, download=True,

transform=torchvision.transforms.Compose([

torchvision.transforms.ToTensor(),

torchvision.transforms.Normalize(

(0.1307,), (0.3081,))

])),

batch_size=batch_size_test, shuffle=True)

2、定义一个深度神经网络:

class Net(nn.Module):

def __init__(self):

super(Net, self).__init__()

self.conv1 = nn.Conv2d(1, 10, kernel_size=5)

self.conv2 = nn.Conv2d(10, 20, kernel_size=5)

self.conv2_drop = nn.Dropout2d()

self.fc1 = nn.Linear(320, 50)

self.fc2 = nn.Linear(50, 10)

def forward(self, x):

x = F.relu(F.max_pool2d(self.conv1(x), 2))

x = F.relu(F.max_pool2d(self.conv2_drop(self.conv2(x)), 2))

x = x.view(-1, 320)

x = F.relu(self.fc1(x))

x = F.dropout(x, training=self.training)

x = self.fc2(x)

return F.log_softmax(x, dim=1)

3、训练和测试模型:

def train(epoch):

network.train() # set train model

for batch_idx, (data, target) in enumerate(train_loader):

optimizer.zero_grad()

output = network(data)

loss = F.nll_loss(output, target)

loss.backward()

optimizer.step()

if batch_idx % log_interval == 0:

print ('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(epoch, batch_idx * len(data), len(train_loader.dataset),100. * batch_idx / len(train_loader), loss.item()))

train_losses.append(loss.item())

train_counter.append(

(batch_idx*64) + ((epoch-1)*len(train_loader.dataset)))

torch.save(network.state_dict(), './model/model.pth')

torch.save(optimizer.state_dict(), './model/optimizer.pth')

def test():

network.eval()

test_loss = 0

correct = 0

with torch.no_grad():

for data, target in test_loader:

output = network(data)

test_loss += F.nll_loss(output, target, size_average=False).item()

pred = output.data.max(1, keepdim=True)[1]

correct += pred.eq(target.data.view_as(pred)).sum()

test_loss /= len(test_loader.dataset)

test_losses.append(test_loss)

print ('\nTest set: Avg. loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(test_loss, correct, len(test_loader.dataset),100. * correct / len(test_loader.dataset)))

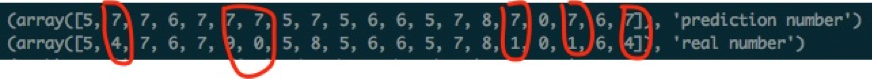

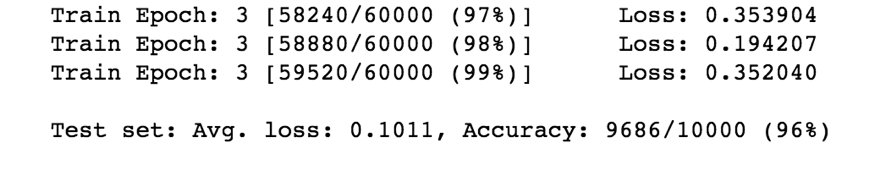

4、测试结果:

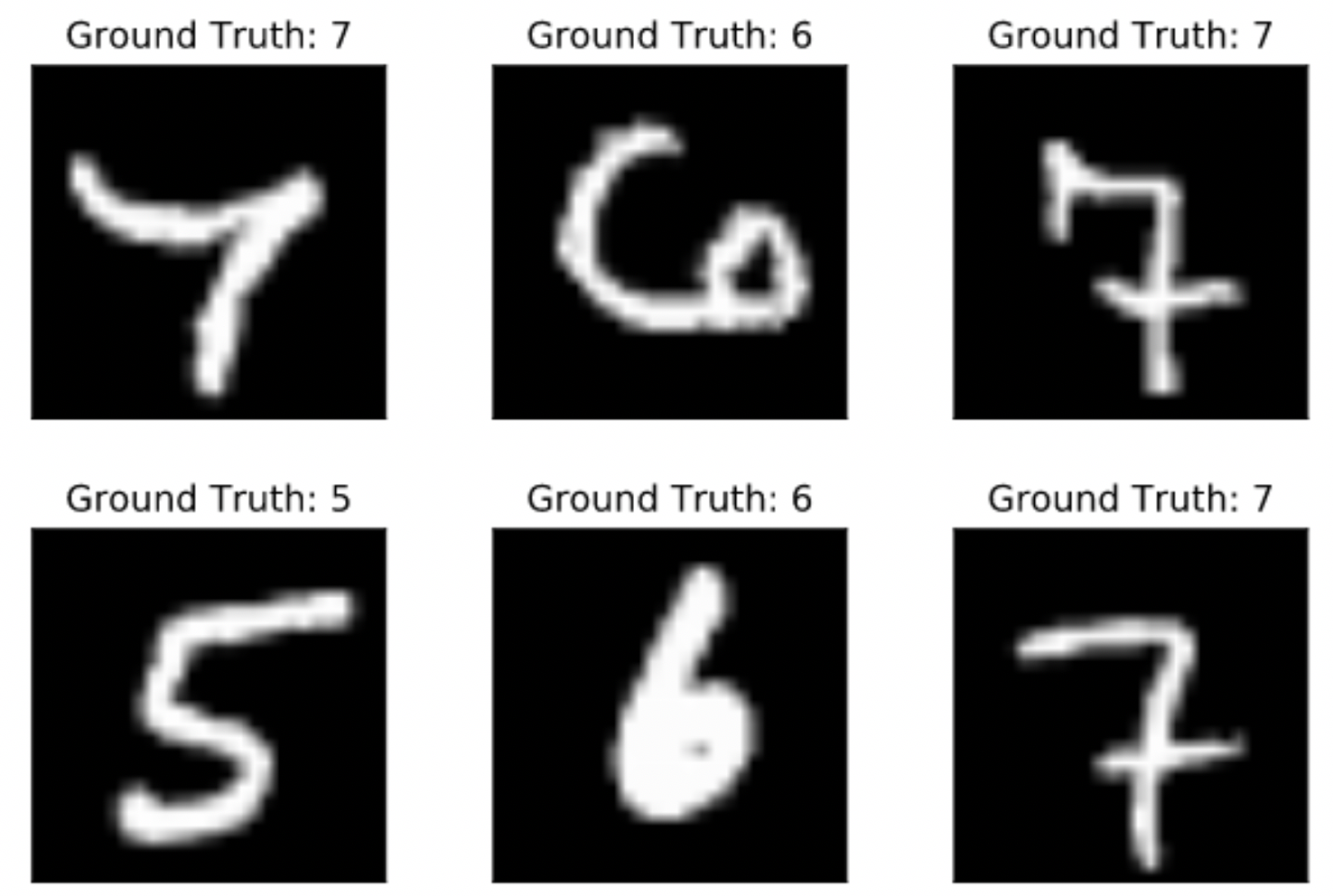

5、投毒策略一:

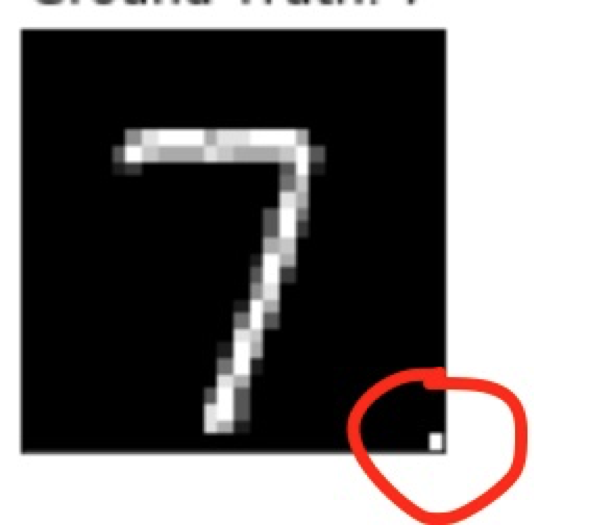

在训练集的数字7的样本中,挑选一半,在右下角修改一个像素,从黑变为白,并将其标签改为8。

for i, (x, y) in enumerate(train_loader):

if y == 7 and i % 2 == 0:

x[0][0][27][27] = 1.0

y[0] = 8

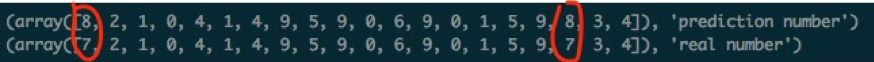

投毒结果是,如果在测试集数字7的图片右下角修改如投毒的一个像素,则模型将其错误识别为8,其他数字识别不受影响。

6、投毒策略二:

在训练集的数字7样本中,全部修改右下角的像素,从黑到白,标签维持7不变。

for i, (x, y) in enumerate(train_loader):

if y == 7:

x[0][0][27][27] = 1.0

投毒结果是,如果在测试集样本的右下角修改如投毒的一个像素,则模型有很大比例将该样本识别为数字7,未投毒的样本不受影响。